Meta has officially declined to sign the European Union’s new Code of Practice for general-purpose AI models.

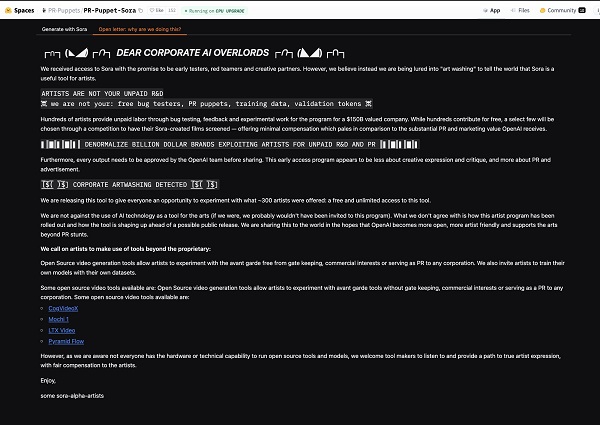

In a statement posted on LinkedIn, Joel Kaplan, Meta’s Chief Global Affairs Officer, said the company has decided against signing the voluntary code after careful review. He criticized the framework as overly broad and full of legal uncertainties that could hinder innovation in the AI space.

Joel Kaplan’s statement. Credit: Joel Kaplan (LinkedIn)

The EU introduced the Code of Practice earlier this month as a guide for companies to prepare for the new AI Act. It asks developers of general-purpose models to:

- Document how their systems are trained, including providing summaries of training data

- Embed safety, security, and transparency safeguards

- Respect copyright by avoiding the use of pirated content and honouring requests from creators who explicitly don’t want their work included in training datasets

The code is voluntary and is intended to ease companies into the stricter obligations that will soon take effect under the AI Act.

Meta, however, sees the move as regulatory overreach. The company argues the code could slow down the development of powerful AI models in Europe and make it harder for local businesses to build on top of them. “Europe is heading down the wrong path on AI,” Kaplan wrote.

The AI Act itself takes a risk-based approach. It bans certain uses of AI, such as social scoring and manipulative behavioural targeting, and places tight controls on high-risk applications such as biometric surveillance, education, and employment tools. Its transparency and risk management obligations for general-purpose AI models apply from August 2, 2025; developers such as OpenAI, Google, Anthropic, and Meta whose models were already on the market before that date have until August 2, 2027, to comply.

Meta’s public rejection of the voluntary code highlights the growing tension between large tech firms and EU lawmakers over how to balance innovation with responsible regulation.